Emotion in Motion

Key takeaways

We explored the intersection of robotics, emotions, and human-robot interaction through a user elicitation study with Sony Toio robots. Participants generated motions to convey emotion, and we analyzed distance and speed features to extract patterns. The project strengthened my UI/UX research skills and highlighted how motion can bridge emotional communication for non-anthropomorphic robots.

Paper

Download the draft manuscript: Emotion in Motion manuscript

Abstract

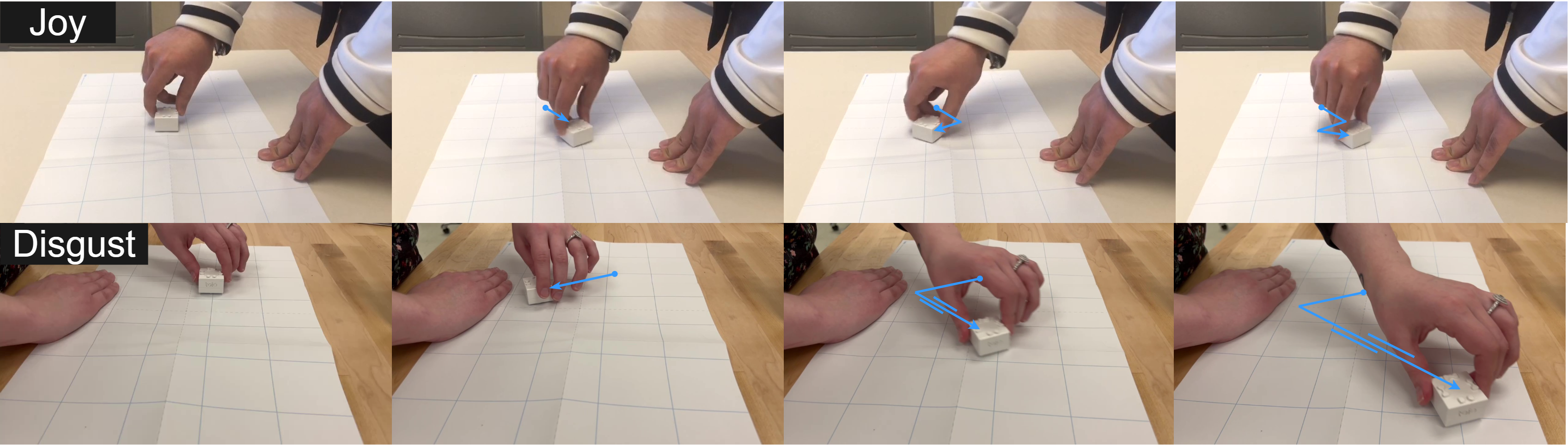

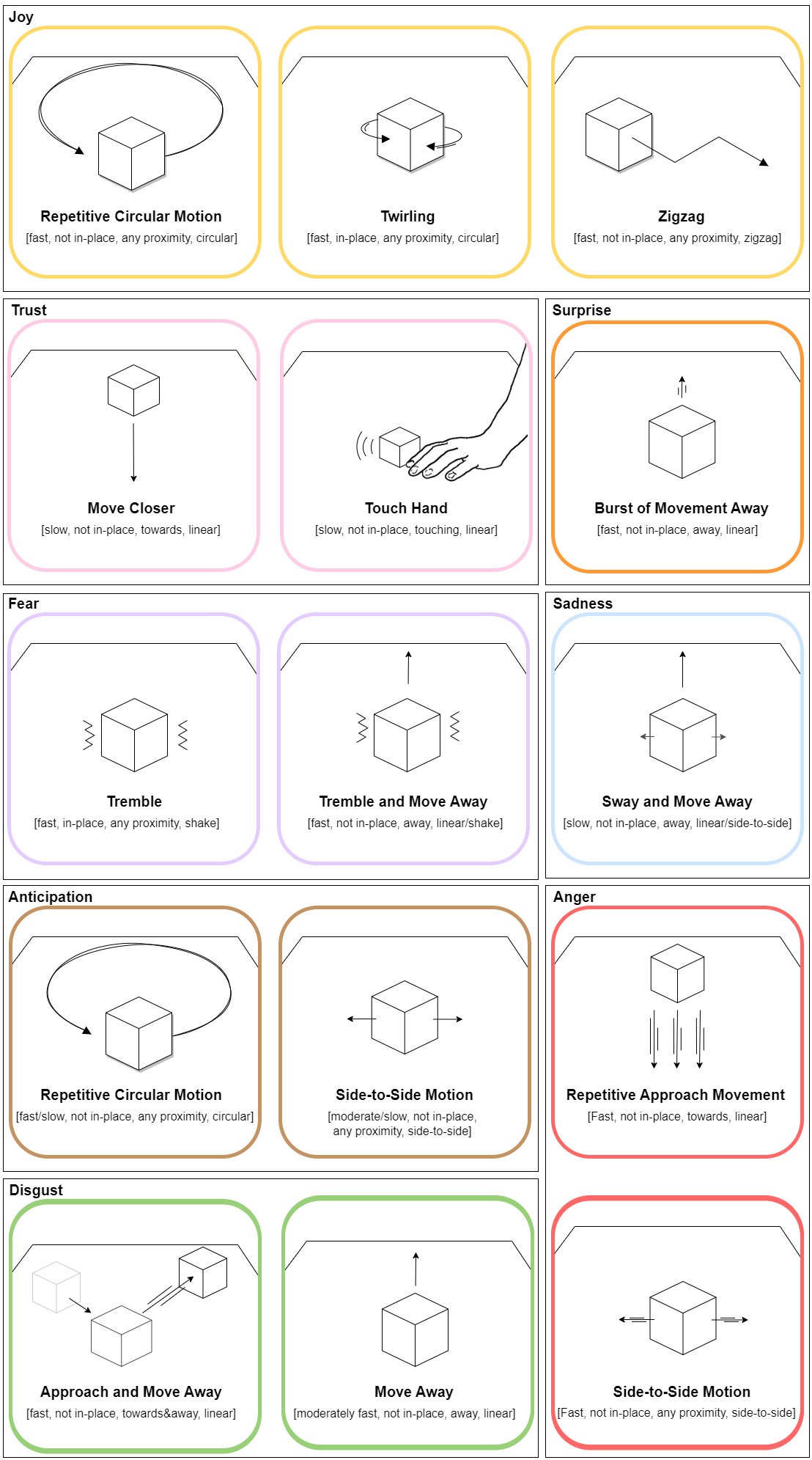

Non-anthropomorphic robots lack human-like expressions, so motion becomes a key communication channel. This study focused on user-defined motions and the emotions those motions convey. Participants controlled robots by hand and created movements for eight emotions. The resulting patterns offer insights into designing more expressive robots for social settings.

Methodology

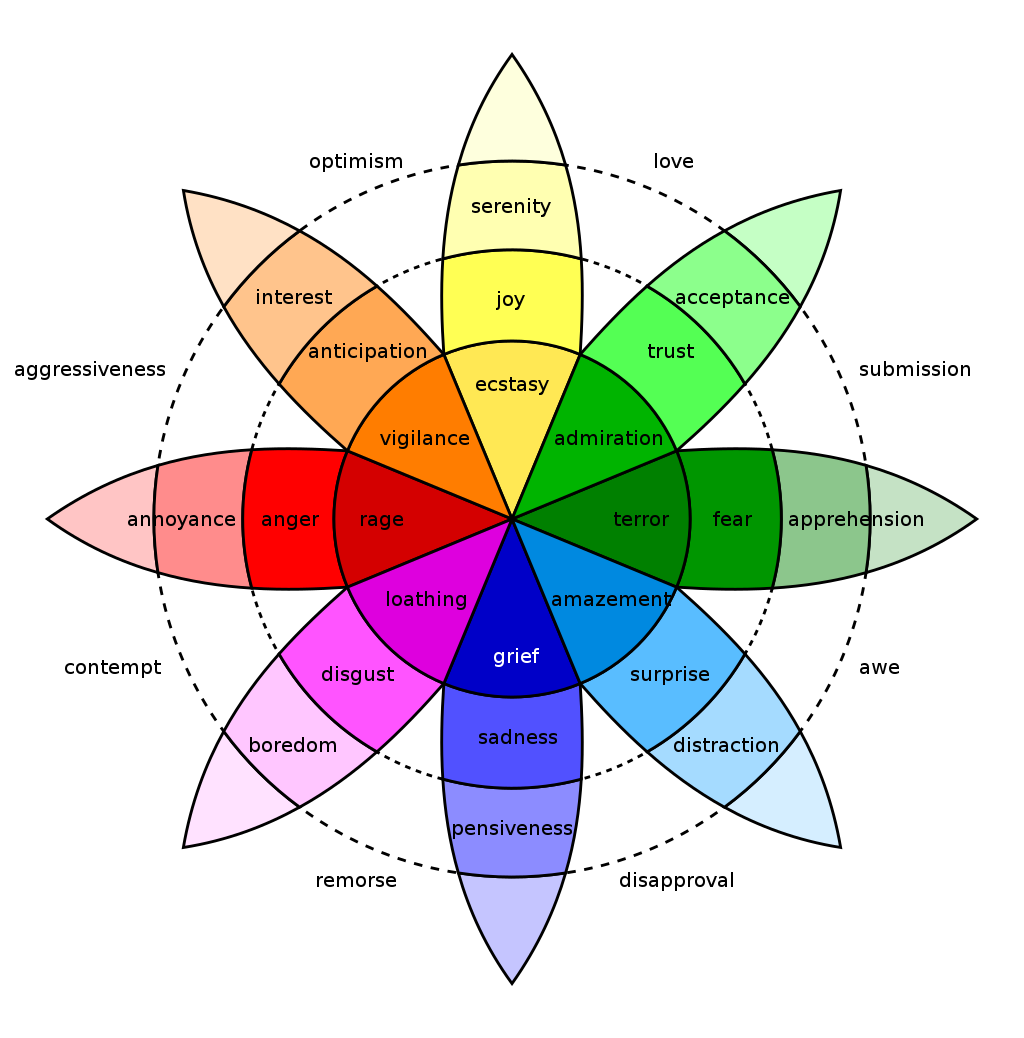

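We ran ideation sessions in which participants moved the robots by hand, and we recorded the resulting motion data. Plutchik’s model, which defines eight primary emotions, guided emotion selection and let us go beyond Ekman’s six basic emotions. From each recording we extracted two features, distance and speed, and compared them across emotions.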
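To make the feature extraction step concrete, here is a minimal sketch of how distance and speed could be computed from one recorded motion. It is an illustration, not the study's actual pipeline: the `(t, x, y)` sample format and the function name `motion_features` are assumptions, and the units depend on how positions and timestamps were logged.

```python
import math


def motion_features(samples):
    """Compute path length, duration, and mean speed for one motion.

    `samples` is a hypothetical format: a time-ordered list of
    (t_seconds, x, y) tuples. The real recordings may be stored differently.
    """
    if len(samples) < 2:
        # A single point (or nothing) has no measurable movement.
        return {"distance": 0.0, "duration": 0.0, "mean_speed": 0.0}

    # Sum the straight-line distance between consecutive positions.
    distance = 0.0
    for (_, x0, y0), (_, x1, y1) in zip(samples, samples[1:]):
        distance += math.hypot(x1 - x0, y1 - y0)

    duration = samples[-1][0] - samples[0][0]
    # Guard against identical timestamps to avoid dividing by zero.
    mean_speed = distance / duration if duration > 0 else 0.0

    return {"distance": distance, "duration": duration, "mean_speed": mean_speed}


if __name__ == "__main__":
    # Toy trajectory: move 5 units in the first second, then pause.
    demo = [(0.0, 0.0, 0.0), (1.0, 3.0, 4.0), (2.0, 3.0, 4.0)]
    print(motion_features(demo))
```

Computing these values per recording and grouping them by emotion label would give the kind of per-emotion comparison described above.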

Results

Distinct movement patterns emerged for different emotions. These patterns can inform motion-based emotional expressions in non-anthropomorphic robots.

Conclusion

By uncovering relationships between movement and emotional perception, we can design better interactions between people and robots.

